What? That’s Not a Chair!: How Robot Informational Errors Affect Children’s Trust Towards Robots

Abstract

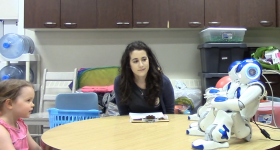

Robots that interact with children are becoming more common in places such as child care and hospital environments. Such robots may mistakenly provide nonsensical information or have mechanical malfunctions, yet we know little about how children perceive these robot errors and how the errors affect trust. This is particularly important when robots provide children with information or instructions, such as in education or health care. Drawing inspiration from established psychology literature investigating how children trust people who teach or provide them with information (human informants), we designed and conducted an experiment to examine how robot errors affect young children’s (3-5 years old) trust in robots. Our results suggest that children use their understanding of people to develop their theory of mind of robots, and use this to determine how to interact with robots. Specifically, we found that children developed their trust model of a robot based on the robot’s previous errors, similar to how they would for a person. However, we failed to replicate other prior findings with robots. Our results provide insight into the theory of mind of children as young as 3 years old, and into how they perceive robot errors and develop trust.

Citation

Geiskkovitch, D. Y., Thiessen, R., Young, J. E., & Glenwright, M. R. (2019). What? That’s not a chair!: How robot informational errors affect children’s trust towards robots. In Proceedings of the 14th ACM/IEEE International Conference on Human-Robot Interaction.

Authors

Denise Geiskkovitch

Alumni

Raquel Thiessen

PhD Student

James E. Young

Professor

As well as: Melanie R. Glenwright