
GyroWand: An Approach to IMU-Based Raycasting for Augmented Reality

Abstract

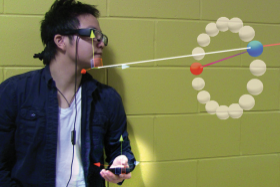

Optical see-through head-mounted displays (OST-HMDs), such as Epson’s Moverio (www.epson.com/moverio) and Microsoft’s HoloLens (www.microsoft.com/microsoft-hololens), enable augmented reality (AR) applications that display virtual objects overlaid on the real world. At the core of this new generation of devices are low-cost tracking technologies using techniques such as marker-based tracking, simultaneous localization and mapping (SLAM), and dead reckoning based on inertial measurement units (IMUs), which allow us to interpret users’ motion in the real world in relation to the virtual content for the purposes of navigation and interaction. These tracking technologies enable AR applications in mobile settings at an affordable price. The advantages of pervasive tracking come at the cost of limiting interaction possibilities, however. Off-the-shelf HMDs still depend on peripherals such as handheld touch pads. Mid-air gestures and other natural user interfaces (NUIs) offer an alternative to peripherals but are limited to interacting with content relatively close to the user (direct manipulation) and are prone to tracking errors and arm fatigue. Raycasting, an interaction technique widely explored in traditional virtual reality (VR), is another alternative for interaction in AR [6]. Raycasting generally requires absolute tracking of a handheld controller (known as a wand), but the limited tracking capabilities of novel AR devices make it difficult to track a controller’s location.

In this article, we introduce a raycasting technique for AR HMDs. Our approach is to provide raycasting based only on the orientation of a handheld controller. The rotation of a handheld controller cannot be used directly to determine the direction of the ray because of intrinsic problems with IMUs, such as magnetic interference and sensor drift. Moreover, a user’s movement in space creates a situation in which the virtual content and ray direction are often not aligned with the HMD’s field of view (FOV). To address these challenges, we introduce GyroWand, a raycasting technique for AR HMDs using IMU rotational data from a handheld controller. GyroWand’s fundamental design differs from traditional raycasting in four ways:

■ interprets IMU rotational data using a state machine, which includes anchor, active, out-of-sight, and disambiguation states;

■ compensates for drift and interference by taking the orientation of the handheld controller as the initial rotation (zero) when starting an interaction;

■ initiates raycasting from any spatial coordinate (such as a chin or shoulder); and

■ provides three disambiguation methods: Lock and Drag, Lock and Twist, and AutoTwist.
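
The second and third design points can be illustrated with a minimal sketch. This is not the authors' implementation. It assumes the IMU reports orientation as a unit quaternion `(w, x, y, z)`, that the ray points along `-z` when the interaction starts, and that the origin is a fixed offset in the HMD's frame. The class `ZeroedRay` and all helper names are hypothetical. The idea shown is only that the orientation captured at the start of an interaction serves as the zero, so any drift accumulated before that moment cancels out.

```python
import math

def q_mul(a, b):
    """Hamilton product of two quaternions given as (w, x, y, z)."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )

def q_conj(q):
    """Conjugate, which equals the inverse for a unit quaternion."""
    w, x, y, z = q
    return (w, -x, -y, -z)

def q_rotate(q, v):
    """Rotate 3D vector v by unit quaternion q."""
    _, x, y, z = q_mul(q_mul(q, (0.0, v[0], v[1], v[2])), q_conj(q))
    return (x, y, z)

def q_from_axis_angle(axis, degrees):
    """Unit quaternion for a rotation of `degrees` about a unit axis."""
    half = math.radians(degrees) / 2.0
    s = math.sin(half)
    return (math.cos(half), axis[0] * s, axis[1] * s, axis[2] * s)

class ZeroedRay:
    """Hypothetical helper: a ray driven only by relative IMU rotation."""

    def __init__(self, origin, forward=(0.0, 0.0, -1.0)):
        self.origin = origin      # assumed offset, e.g. near the chin
        self.forward = forward    # assumed ray direction at interaction start
        self.reference = None     # orientation treated as "zero"

    def start_interaction(self, imu_orientation):
        # Whatever the IMU reports now becomes the zero rotation.
        self.reference = imu_orientation

    def direction(self, imu_orientation):
        # Rotation accumulated since the interaction started.
        relative = q_mul(imu_orientation, q_conj(self.reference))
        return q_rotate(relative, self.forward)

# Usage: the reading at interaction start is already off by 37 degrees.
ray = ZeroedRay(origin=(0.0, -0.1, 0.0))
drifted = q_from_axis_angle((0.0, 0.0, 1.0), 37.0)
ray.start_interaction(drifted)
turned = q_mul(q_from_axis_angle((0.0, 1.0, 0.0), 90.0), drifted)
print(ray.origin, ray.direction(turned))
```

Because only the rotation relative to the reference is used, the initial 37-degree offset has no effect on the ray. The sketch does not model drift that builds up during an interaction, nor the state machine or the disambiguation methods.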

This article is a condensed version of a paper presented at the 2015 ACM Symposium on Spatial User Interaction. Here we focus on our design choices and their implications and refer the reader to the full paper for the experimental results.

Citation

J. D. Hincapié-Ramos, K. Özacar, P. P. Irani and Y. Kitamura. 2016. GyroWand: An Approach to IMU-Based Raycasting for Augmented Reality. In IEEE Computer Graphics and Applications, vol. 36, no. 2, pp. 90-96, Mar.-Apr. 2016.

Authors

Pourang Irani

Professor, Canada Research Chair

at University of British Columbia Okanagan Campus

As well as: K. Özacar and Y. Kitamura