Interactive Detail-in-Context Using Two Pan-and-Tilt Cameras in Teleoperation

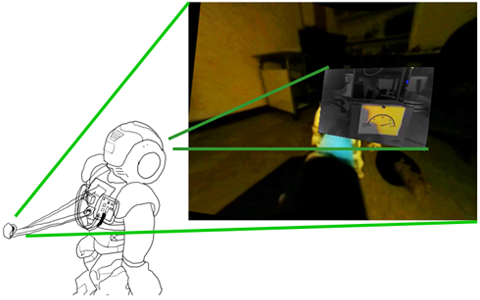

Robot teleoperation, such as for search and rescue, uses multiple specialized cameras (e.g., wide environmental and sharp narrow views) to aid task awareness. Simple display techniques, such as tiling, require ongoing mental mapping between the views; cameras that pan or tilt exacerbate the problem because the inter-view relationship changes. The detail-in-context technique bypasses this mental mapping requirement by providing a single integrated feed showing all cameras, with detail overlaid within the context. However, how this technique can be adapted for robot teleoperation with multiple pan-and-tilt cameras has not yet been demonstrated. We present Monocle, an interactive detail-in-context teleoperation interface that integrates a pan-and-tilt narrow-angle first-person view into a wide-angle behind-robot view; operators can move the Monocle around a scene to obtain more resolution where needed. Evaluation results demonstrate Monocle’s feasibility and show that it can help operators complete search and rescue tasks more effectively than simple display solutions.

Project Publications

Novel Egocentric Robot Teleoperation Interfaces for Search and Rescue (2021)

Stela H. Seo. Novel Egocentric Robot Teleoperation Interfaces for Search and Rescue. Ph.D. Thesis (2021). University of Manitoba, Canada.

Monocle

Stela H. Seo, Daniel J. Rea, Joel Wiebe, James E. Young. "Monocle: interactive detail-in-context using two pan-and-tilt cameras to improve teleoperation effectiveness," IEEE International Symposium on Robot and Human Interactive Communication, RO-MAN, 2017. Lisbon, Portugal.