Playing the ‘Trust Game’ with Robots: Social Strategies and Experiences

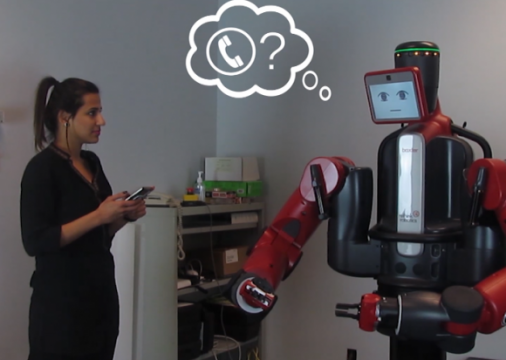

We present the results of a pilot study that investigates whether and how people judge the trustworthiness of a robot during social Human-Robot Interaction (sHRI). Current research in sHRI has observed that people tend to interact with robots socially. However, results from neuroscience suggest that people use different cognitive mechanisms when interacting with robots than they do with humans, leading to a debate about whether people truly perceive robots as social entities. Our paper focuses on one aspect of this debate by examining trustworthiness between people and robots using the Trust Game scenario from behavioral economics. Our pilot study replicates a trust game scenario in which a person invests money with a robot trustee in the hope of receiving a larger sum back (trusting the robot to return more than was invested), and then gets a chance to invest once more. Our qualitative analysis of investing behavior and interviews with participants suggests that people may follow a human-robot (h-r) trust model that is quite similar to the human-human trust model. Our results also suggest a possible resolution to the debate between sHRI and neuroscience: people try to interact socially with robots, but due to a lack of common social cues, they draw from social experience or create new experiences by actively exploring the robot's behavior.

Project Publications

Roberta C. Ramos Mota, Daniel J. Rea, Anna Le Tran, James E. Young, Ehud Sharlin and Mario C. Sousa. "Playing the 'Trust Game' with Robots: Social Strategies and Experiences," Robot and Human Interactive Communication (RO-MAN). 2016. New York, USA.

Collaborators

As well as: Roberta C. Ramos Mota, Anna Le Tran, Ehud Sharlin, Mario C. Sousa